Perception

Perception is central to autonomous robots, since all action planning builds on the knowledge extracted from the sensor systems.

Robots obtain information about their own state (posture, position in space, etc.) mainly from accelerometers, gyroscopes, and joint angle sensors. These measurements must be suitably fused and filtered. This allows the robot both to move in a controlled manner without losing its balance and to correctly interpret the information obtained from its other sensor systems.
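A minimal illustration of such fusion is a complementary filter. It combines the integrated gyroscope rate, which is smooth but drifts, with the tilt implied by the gravity vector measured by the accelerometer, which is drift-free but noisy. The sketch below estimates a single pitch angle. The axis convention in `atan2(acc_x, acc_z)`, the weight `alpha`, and the function name are assumptions; the source does not say which filter its robots use (Kalman filters are a common alternative).

```python
import math

def complementary_filter(pitch_prev, gyro_rate, acc_x, acc_z, dt, alpha=0.98):
    """Fuse one gyroscope and one accelerometer sample into a pitch estimate (rad).

    pitch_prev:   previous pitch estimate (rad)
    gyro_rate:    angular rate about the pitch axis (rad/s)
    acc_x, acc_z: accelerometer readings in any consistent unit (assumed axes)
    dt:           time since the previous sample (s)
    alpha:        weight of the gyro path (hypothetical default)
    """
    # Integrating the gyro is accurate over short intervals but drifts over time.
    pitch_gyro = pitch_prev + gyro_rate * dt
    # The direction of gravity gives an absolute, but noisy, tilt estimate.
    pitch_acc = math.atan2(acc_x, acc_z)
    # Blend: the gyro dominates short-term changes, the accelerometer removes drift.
    return alpha * pitch_gyro + (1.0 - alpha) * pitch_acc
```

With the robot standing still and tilted by 0.1 rad, repeated calls starting from an estimate of 0 converge toward 0.1, because the accelerometer term slowly pulls the estimate to the true tilt; during fast movements the gyroscope term dominates.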

Information about the robot's environment is also extracted from distance sensors (sonar, infrared, laser, etc.), but mainly from camera systems. This must happen in real time, i.e. 20 to 30 times per second, so that fast movements can be tracked smoothly.
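For a frame rate $f$, processing the images one after another leaves at most the following time per image:

$$
t_{\text{frame}} = \frac{1}{f}: \qquad f = 30\,\text{Hz} \;\Rightarrow\; t_{\text{frame}} \approx 33.3\,\text{ms}, \qquad f = 20\,\text{Hz} \;\Rightarrow\; t_{\text{frame}} = 50\,\text{ms}
$$

All per-image processing must fit into this budget if no frames are to be dropped.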

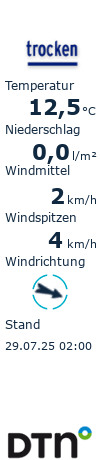

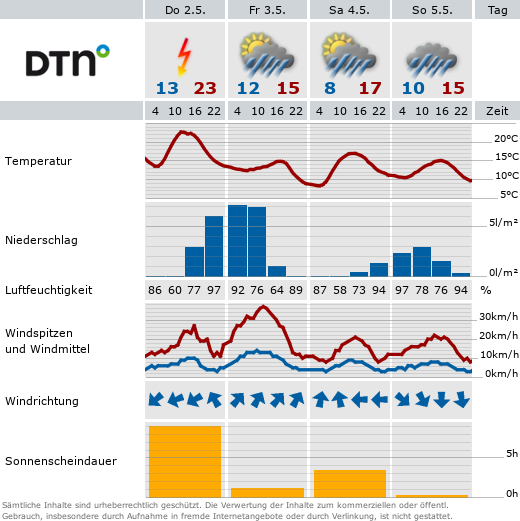

To make this possible on the comparatively weak CPUs of the standard robots, the color scheme of a robot soccer field is precisely specified. Originally, additional artificial landmarks were also placed around the field. From year to year, these specifications are relaxed further, raising the challenge for the robots' perception systems. Besides removing the artificial landmarks and making the goals resemble real soccer goals more closely, this relaxation also affects the lighting conditions.

Efficient image processing

The Aibo's poor camera makes image processing considerably harder. Robust color segmentation is essential for extracting information about relevant objects in real time. The Nao's camera is generally of higher quality, but the same restrictions apply in principle, above all the very limited computing power available.
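A widely used way to make color segmentation cheap enough for real time is a precomputed lookup table: each quantized pixel value is mapped to a color class offline, so classifying a pixel costs a single table access. The sketch below assumes YUV input with 8-bit channels, a hypothetical set of color classes, and hand-labeled calibration samples; it illustrates the technique and is not necessarily the method used on the Aibo or Nao here.

```python
import numpy as np

# Hypothetical color classes; a real system defines its own set.
UNKNOWN, GREEN, WHITE, ORANGE = 0, 1, 2, 3

def build_color_table(samples, bits=6):
    """Build a lookup table indexed by quantized (Y, U, V).

    samples: iterable of ((y, u, v), class_id) pairs with 8-bit channels,
             e.g. taken from hand-labeled calibration images (assumption).
    bits:    bits kept per channel; 6 bits give 64**3 = 262144 entries.
    """
    shift = 8 - bits
    table = np.full((1 << bits,) * 3, UNKNOWN, dtype=np.uint8)
    for (y, u, v), cls in samples:
        # Dropping the low bits lets one sample cover a small neighborhood of colors.
        table[y >> shift, u >> shift, v >> shift] = cls
    return table

def segment(image_yuv, table, bits=6):
    """Classify every pixel of an (H, W, 3) uint8 YUV image with one table lookup."""
    shift = 8 - bits
    q = image_yuv >> shift
    return table[q[..., 0], q[..., 1], q[..., 2]]
```

Because of the quantization, a pixel such as `(101, 51, 61)` falls into the same table cell as the labeled sample `(100, 50, 60)` and receives the same class.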

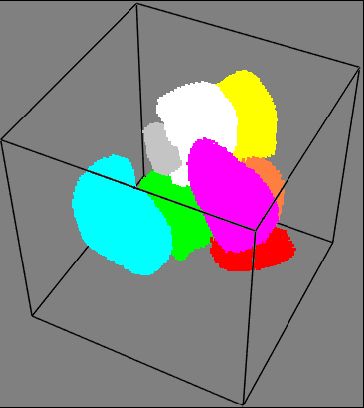

Generalizing the color classes with the help of radiation models makes color segmentation more robust against variations in illumination.
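The source does not specify which radiation model is used. As a simple stand-in, the sketch below classifies pixels by normalized chromaticity $(r, g) = (R, G) / (R + G + B)$. Under a diffuse (Lambertian) reflection model, making the light brighter or dimmer scales R, G, and B by the same factor, which cancels in this ratio. It only illustrates the idea of generalizing color classes across illumination changes; it does not handle changes in the color of the light. The prototype values and the distance threshold are hypothetical.

```python
import numpy as np

def chromaticity(rgb):
    """Map (..., 3) RGB values to normalized (r, g) chromaticity.

    A common brightness factor on R, G and B cancels in this ratio.
    """
    rgb = np.asarray(rgb, dtype=np.float64)
    total = rgb.sum(axis=-1, keepdims=True)
    total[total == 0] = 1.0  # avoid division by zero for black pixels
    return rgb[..., :2] / total

def classify(rgb, prototypes, max_dist=0.05):
    """Assign each pixel to the nearest chromaticity prototype, or -1 if none is close.

    prototypes: (K, 2) array of reference (r, g) values, one per color class.
    """
    c = chromaticity(rgb)
    d = np.linalg.norm(c[..., None, :] - prototypes, axis=-1)  # distances, shape (..., K)
    return np.where(d.min(axis=-1) <= max_dist, d.argmin(axis=-1), -1)
```

A green pixel `(40, 120, 40)` and the same pixel at half brightness `(20, 60, 20)` both map to `(0.2, 0.6)` and therefore to the same class.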

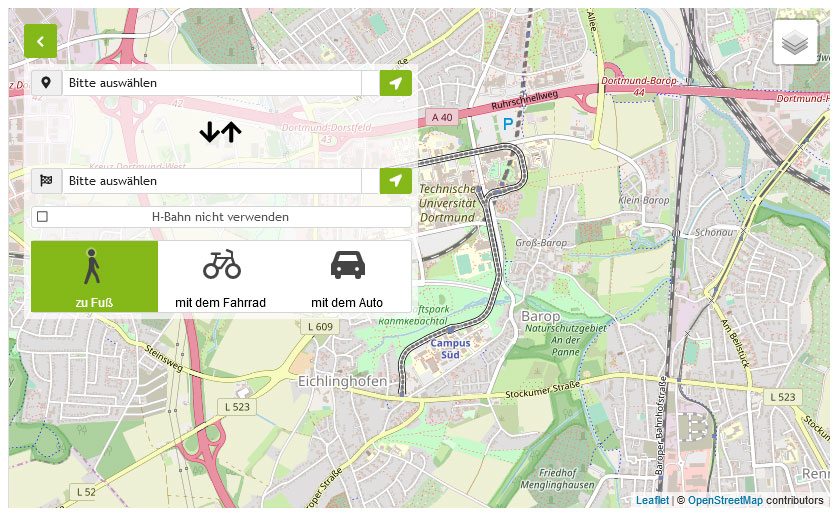

Sensor fusion offers a further advantage. In the Standard Platform League, each team consists of three to four robots. The robots can communicate with each other via wireless LAN, so each robot can access not only its own sensor information but also that of its teammates, and thereby improve its own model of the environment.
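One simple way to combine shared observations is an inverse-variance weighted average, in which teammates with more certain observations get more weight. The sketch below fuses ball position estimates and assumes that each robot has already converted its observation into shared field coordinates using its own self-localization, that the errors are independent, and that a single isotropic variance describes each estimate. `BallEstimate` and `fuse` are hypothetical names, not the team's actual message format or algorithm.

```python
from dataclasses import dataclass

@dataclass
class BallEstimate:
    """Ball position in shared field coordinates (m) with isotropic variance (m^2)."""
    x: float
    y: float
    var: float

def fuse(estimates):
    """Inverse-variance weighted average of independent ball estimates.

    Returns the fused estimate, or None if no estimates are available.
    """
    if not estimates:
        return None
    weights = [1.0 / e.var for e in estimates]
    w_sum = sum(weights)
    x = sum(w * e.x for w, e in zip(weights, estimates)) / w_sum
    y = sum(w * e.y for w, e in zip(weights, estimates)) / w_sum
    # The fused variance is smaller than that of any single estimate.
    return BallEstimate(x, y, 1.0 / w_sum)
```

Two equally reliable estimates at x = 1.0 m and x = 2.0 m fuse to x = 1.5 m with half the variance; an estimate with a much larger variance barely shifts the result.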

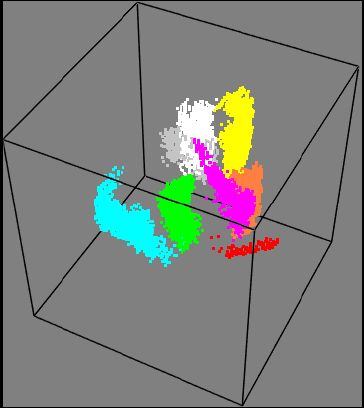

OmniVision

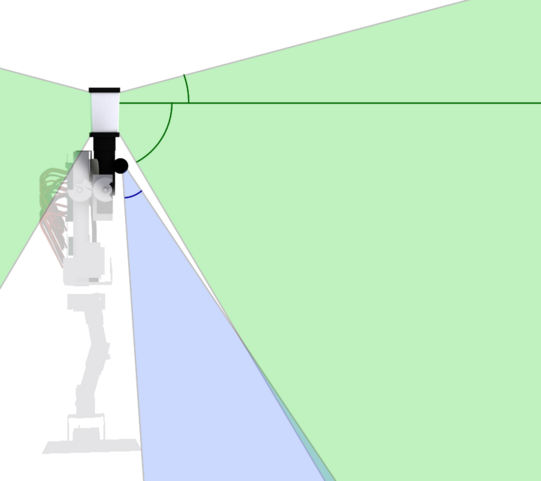

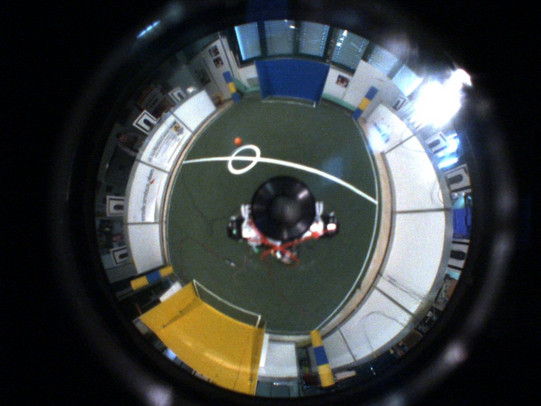

In contrast to the very limited perceptual capabilities of the Aibo, we decided to use a catadioptric camera system (i.e., one with both refracting and reflecting elements) for the Bender humanoid robot. It gives the robot a nearly omnidirectional field of view. The blind spot directly in front of the robot can additionally be covered by a small directional camera.

Such a distorted image can be converted back into individual perspective views or even a panoramic view using suitable mathematical models of the imaging process.
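Under the simplest assumptions (a radially symmetric mirror whose axis projects to a known image center), conversion to a panoramic view is a polar-to-Cartesian resampling: each output column corresponds to an azimuth, each output row to a radius in the mirror image. The sketch below uses a linear radius mapping and nearest-neighbor sampling as placeholders; a real conversion would use the calibrated imaging model of the specific mirror. The function and parameter names are hypothetical.

```python
import numpy as np

def unwarp_panorama(img, center, r_min, r_max, out_w=360, out_h=64):
    """Resample a catadioptric image into a panoramic strip (nearest neighbor).

    center:       (cx, cy) pixel where the mirror axis meets the image (assumed known)
    r_min, r_max: inner and outer radius (pixels) of the usable mirror ring
    Column j corresponds to azimuth 2*pi*j/out_w; row 0 corresponds to r_max.
    """
    cx, cy = center
    theta = 2.0 * np.pi * np.arange(out_w) / out_w      # azimuth per column
    radius = np.linspace(r_max, r_min, out_h)            # mirror radius per row (linear: assumption)
    rr, tt = np.meshgrid(radius, theta, indexing="ij")   # both of shape (out_h, out_w)
    # Source pixel for every output pixel, clipped to the image bounds.
    u = np.clip(np.rint(cx + rr * np.cos(tt)).astype(int), 0, img.shape[1] - 1)
    v = np.clip(np.rint(cy + rr * np.sin(tt)).astype(int), 0, img.shape[0] - 1)
    return img[v, u]
```

Every output pixel requires a coordinate computation and a memory access, which is the computational cost that the following paragraph argues against paying for every frame.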

Such a conversion, however, costs valuable computing time, so knowledge of the geometry of the catadioptric image should instead be built directly into its processing. Combined with the robot's current pose estimate, derived from joint angle data, inertial sensors, and the information just extracted from the previous image, the image can be processed very efficiently without prior conversion, and information about all relevant visible objects can be extracted in real time.
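A minimal sketch of processing the raw image directly, under stated assumptions: only the pixels of interest (for example, the centroid of a ball-colored blob) are mapped to a bearing and floor distance, using a radius-to-distance calibration table measured once offline, and the search is restricted to a window around the object's position in the previous image. The calibration values, the fixed window margin, and all names are hypothetical. The sketch ignores body tilt, which on a humanoid would have to be compensated using the pose estimate from joint angles and inertial sensors.

```python
import math
import numpy as np

# Hypothetical calibration: radius in the image (pixels from the mirror center)
# versus ground distance (m) of floor points, measured once offline.
CALIB_RADIUS_PX = np.array([20.0, 40.0, 60.0, 80.0, 100.0])
CALIB_DIST_M = np.array([0.3, 0.6, 1.2, 2.5, 6.0])

def pixel_to_ground(u, v, center):
    """Map one image pixel directly to a robot-relative (bearing, distance) on the floor.

    For a radially symmetric mirror, the pixel's angle around the center is the
    bearing, and its radius determines the distance via the calibration table.
    np.interp clamps radii outside the calibrated range to the end values.
    """
    dx, dy = u - center[0], v - center[1]
    bearing = math.atan2(dy, dx)
    distance = float(np.interp(math.hypot(dx, dy), CALIB_RADIUS_PX, CALIB_DIST_M))
    return bearing, distance

def search_window(prev_px, margin, shape):
    """Box (u0, v0, u1, v1) around the object's pixel position in the previous
    image, clipped to the image; only this region is searched in the next frame."""
    u, v = prev_px
    h, w = shape[:2]
    return (max(u - margin, 0), max(v - margin, 0),
            min(u + margin, w - 1), min(v + margin, h - 1))
```

Instead of resampling the whole image, the cost here is one table interpolation per detected object plus a scan of a small window, which is what makes direct processing attractive on limited hardware.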